Serverless Framework on AWS

Recently I’ve been using Serverless Framework to manage CloudFormation stacks and the serverless functions that run on them. In this post I’ll demonstrate how Serverless makes it easy to deploy cloud resources and integrate them with code.

Overview and Approach

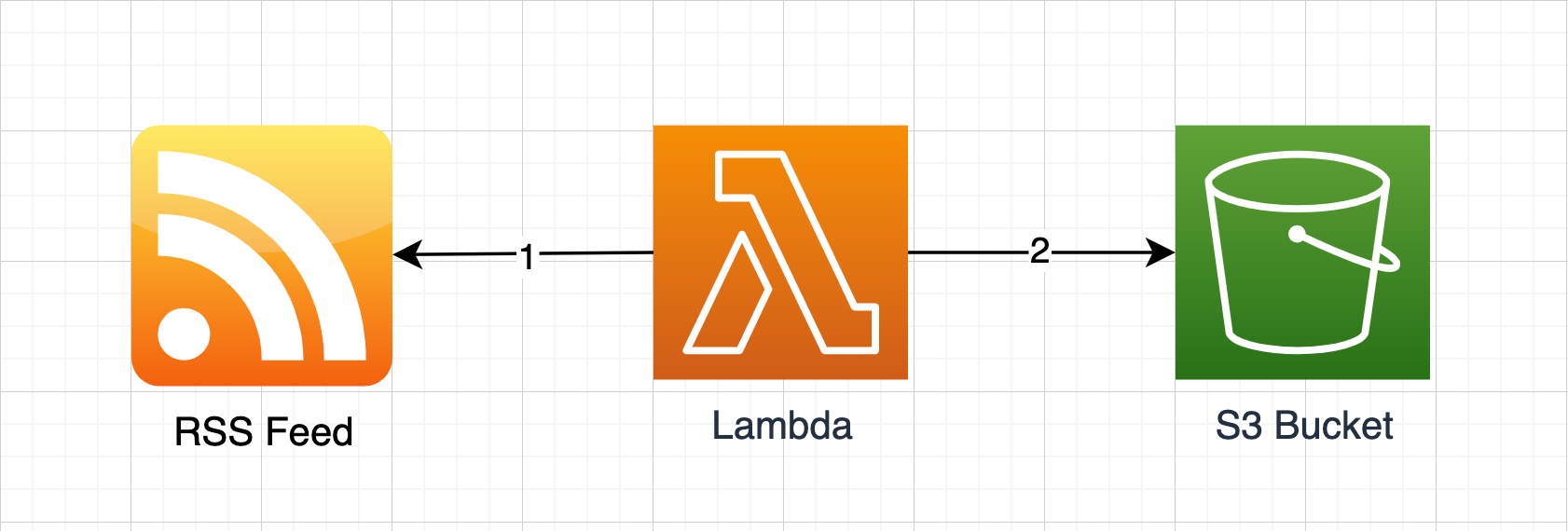

I will create a small workflow that periodically reads from an external RSS feed and saves the result in an S3 bucket. The workflow will be triggered every hour. I’ll add each component to the solution separately and confirm it works before moving on to the next component. The source for the project is here.

Installation

Serverless needs to be installed globally as we’ll be invoking it from the command line.

npm i -g serverless

After installing serverless we can create our project using the aws-nodejs-typescript template. Using this approach has the benefit of our configuration being serverless.ts rather than the traditional serverless.yml. This means we get type-checked config, IntelliSense, and we don’t need to worry about YAML’s annoying indentation.

serverless create --template aws-nodejs-typescript --path headlines
cd headlines
npm i

By default serverless will deploy to us-east-1, so we should set a region in config if we want to deploy to a different one. In the upcoming serverless v3, configuration errors will throw, but in v2 they are warnings only. We can make v2 halt on bad config by setting configValidationMode to "error". Finally, AWS Lambda will by default keep older versions of our functions every time we deploy. We can turn off versioning altogether by setting the versionFunctions property to false.

configValidationMode: "error",
provider: {
  name: "aws",
  region: "us-west-2",
  versionFunctions: false,
  // ...
},

Provided we have cached credentials already configured in the AWS CLI, we can deploy this stack to AWS with:

sls deploy

The result is an API endpoint we can immediately hit with our favorite REST client!

{
  "message": "Go Serverless Webpack (Typescript) v1.0! Your function executed successfully!"
}

Adding Our Own Function

The template added an API Gateway via the http event attached to the function. We’ll remove this, along with the associated apiGateway config. We can also rename the function to whatever we want (I’ve used parseFeed), change the location of the source, and regularly trigger the function using the schedule event.

functions: {
  parseFeed: {
    handler: "src/parseFeed.handle",
    events: [
      {
        schedule: {
          rate: "rate(1 hour)",
        },
      },
    ],
  },
},

After adding rss-parser to our package.json we can implement our function to make calls to an RSS feed.

import type { ScheduledHandler } from "aws-lambda";
import Parser from "rss-parser";

// Runs on the hourly schedule: fetches the feed and logs how many items it has.
export const handle: ScheduledHandler = async () => {
  const feed = await new Parser().parseURL(
    "http://feeds.bbci.co.uk/news/world/rss.xml"
  );
  console.log(`Retrieved ${feed.items.length} items from ${feed.title}`);
};

Deploy again with sls deploy and we’ll see the API Gateway has been removed and the Lambda scheduled hourly. Hitting Test on the Lambda in the AWS Console reads the feed and logs to CloudWatch.
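Because the configuration is TypeScript, the schedule's rate string does not have to be typed by hand. As a minimal sketch (rateExpression and RateUnit are hypothetical names, not part of Serverless Framework), a small helper can build the expression and handle the singular/plural unit that AWS rate expressions require:

```typescript
// Hypothetical helper for illustration only; not part of Serverless Framework.
type RateUnit = "minute" | "hour" | "day";

// Builds an AWS schedule rate expression such as "rate(1 hour)".
function rateExpression(value: number, unit: RateUnit): string {
  if (!Number.isInteger(value) || value < 1) {
    throw new RangeError(`rate value must be a positive integer, got ${value}`);
  }
  // AWS expects a singular unit when the value is 1 and a plural unit otherwise.
  const suffix = value === 1 ? unit : `${unit}s`;
  return `rate(${value} ${suffix})`;
}
```

Assuming the helper lives in or is imported into serverless.ts, rateExpression(1, "hour") could stand in for the literal "rate(1 hour)" in the schedule event.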

Adding An S3 Bucket

The resources section of a Serverless config for AWS accepts raw CloudFormation template syntax, so we can add an S3 bucket much as we would in CloudFormation. We can use the custom property to set variables that we’ll refer to throughout the configuration, as seen below for bucketName.

custom: {
  bucketName: "headlines-${sls:stage}",
},
resources: {
  Resources: {
    Bucket: {
      Type: "AWS::S3::Bucket",
      Properties: {
        BucketName: "${self:custom.bucketName}",
      },
    },
  },
},

We’ll need to expose bucketName to our parseFeed function, and we can do so by adding it to the function’s environment property.

functions: {
  parseFeed: {
    environment: {
      BUCKET_NAME: "${self:custom.bucketName}",
    },
    ...

We can add @aws-sdk/client-s3 via npm and extend our function to write the feed data to S3:

import type { ScheduledHandler } from "aws-lambda";
import { PutObjectCommand, S3Client } from "@aws-sdk/client-s3";
import Parser from "rss-parser";

export const handle: ScheduledHandler = async () => {
  const feed = await parseFeed();
  await persist(feed);
};

// Fetches and parses the RSS feed.
const parseFeed = async () => {
  const feed = await new Parser().parseURL(
    "http://feeds.bbci.co.uk/news/world/rss.xml"
  );
  console.log(`Retrieved ${feed.items.length} items from ${feed.title}`);
  return feed;
};

// Writes the feed as JSON to S3 under a date-based key.
const persist = async (body: any) => {
  const date = new Date();
  // getDate() is the day of the month; getDay() would return the day of the week.
  const key = `${date.getFullYear()}/${
    date.getMonth() + 1
  }/${date.getDate()}/${date.getHours()}${date.getMinutes()}.json`;
  await new S3Client({ region: process.env.AWS_REGION }).send(
    new PutObjectCommand({
      Bucket: process.env.BUCKET_NAME,
      Key: key,
      Body: JSON.stringify(body, null, 2),
    })
  );
  console.log(`Saved ${key}`);
};

After deploying this with sls deploy, our solution is complete!
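One thing to watch in persist is that the hours and minutes are concatenated without padding, so 1:11 and 11:01 both yield 111. The sketch below is one possible refinement, not the project's actual code: buildKey zero-pads each part and uses UTC so keys do not depend on the runtime's time zone, and requireEnv fails fast when a variable such as BUCKET_NAME is missing. Both helper names are hypothetical.

```typescript
// Hypothetical helpers for illustration; the names are not from the original project.

// Zero-pads a number to two digits, e.g. 7 -> "07".
const pad = (n: number): string => (n < 10 ? `0${n}` : String(n));

// Builds a key like "2021/01/05/0907.json" from the UTC parts of a date.
function buildKey(date: Date): string {
  const year = date.getUTCFullYear();
  const month = pad(date.getUTCMonth() + 1); // getUTCMonth() is zero-based
  const day = pad(date.getUTCDate()); // day of the month, not day of the week
  const time = `${pad(date.getUTCHours())}${pad(date.getUTCMinutes())}`;
  return `${year}/${month}/${day}/${time}.json`;
}

// Returns the named variable from the given environment, or throws if it is unset or empty.
function requireEnv(
  name: string,
  env: Record<string, string | undefined>
): string {
  const value = env[name];
  if (!value) {
    throw new Error(`Missing environment variable ${name}`);
  }
  return value;
}
```

Inside the handler these might be called as buildKey(new Date()) and requireEnv("BUCKET_NAME", process.env). Padded keys also sort chronologically when listed in the bucket.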

Improving The Development Experience

Pushing the entire stack every time we edit the code is a frustrating and time-consuming development cycle. We can improve on this by deploying only the function. Once the stack has been deployed for the first time, we can deploy just the function by using:

sls deploy function -f parseFeed

This is much faster as it only bundles and deploys that single function. Faster still is to host the function locally using the serverless-offline plugin. To install it, run the following…

npm install serverless-offline --save-dev

…and add the plugin in serverless.ts:

plugins: ["serverless-webpack", "serverless-offline"],

Bring serverless up in offline mode by running the following:

SLS_DEBUG=* serverless offline

This will print the local endpoints that are hosting our function. We can hit these endpoints with Insomnia or Postman to trigger the function. Offline mode also watches our source, so any change to the function will be reflected the next time we POST to the endpoint, without any need to redeploy.
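For a scheduled function with no http event, the endpoint to POST to follows the shape of the Lambda Invoke API. As a rough sketch, the URL can be assembled as below. It assumes serverless-offline is listening for Lambda invocations on port 3002 and that the function is registered as service-stage-function (for example headlines-dev-parseFeed). Check the output of serverless offline for the actual values, since localInvokeUrl and its defaults are illustrative rather than taken from the project.

```typescript
// Assumptions (verify against the serverless offline output):
// - Lambda invocations are served on port 3002
// - the function is registered as `${service}-${stage}-${functionName}`
interface LocalInvokeTarget {
  service: string;
  stage: string;
  functionName: string;
  port?: number;
}

// Builds the local URL following the Lambda Invoke API path layout.
function localInvokeUrl(target: LocalInvokeTarget): string {
  const port = target.port ?? 3002;
  const lambdaName = `${target.service}-${target.stage}-${target.functionName}`;
  return `http://localhost:${port}/2015-03-31/functions/${lambdaName}/invocations`;
}
```

A POST with an empty JSON body to the resulting URL, from Insomnia, Postman or a script, should then trigger the handler locally.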

Finding The Spec

I found the serverless docs a little light on detail until I understood how to find the serverless.yml spec for each provider. The path on their website to the AWS docs, for example, is Docs > Framework > Overview > Provider CLI References > AWS > User Guide > Serverless.yml - you’ll need to scroll to the bottom of the User Guide sub-menu to find Serverless.yml.

Links

In my next post on Serverless I take a look at logging, function invocation and permissions.