Quality and Suffering in Software Delivery

The way I deliver software is grounded in a belief that quality matters and is the route to less suffering. After building software for a long time my approach has become tacit knowledge: helpful in work I’m directly involved with but elusive to others and, more recently, to AI. I need to write it down but I’ve been extremely good at allowing the annoying complexity to keep me procrastinating. For every guideline I have, I can think of exceptions and a context where it doesn’t apply. But the payoff from having it written down is now higher than ever. Not only can it help me clarify my thinking, and you to understand my approach, but I can use it to build or evaluate AI tooling to help teams deliver software better and faster than ever before.

Software delivery is full of decision making, and as long as you make the right decisions most of the time, the suffering can be mostly avoided. However, it turns out that making the right decisions is really hard, and it’s much easier, more tempting, and at times incentivised, to make the wrong ones. Over time these wrong decisions compound and teams are plunged into unnecessary suffering. Sometimes the decisions are forced on them by elements outside their control, but often it’s self-inflicted. Sometimes wrong decisions are made due to ignorance, or miscommunication, or misunderstanding.

Collaborative delivery is complex. A bunch of individuals with different perspectives and expertise and personalities need to align on a shared goal and work together to achieve it. But this person didn’t understand that person even though both people thought they did. Now some people have put a bunch of effort into solving the wrong problem and a bunch of other people are saying that effort was wasted and we’re frustrated and demotivated and at this stage probably largely dysfunctional. If this sounds like the suffering you’ve experienced then wouldn’t it be great if there was a way to avoid it?

The good news is that all you need to do is ensure that everyone understands each other, and together, do as little as possible. The bad news is that it’s very hard to do so and intuition (wrongly) advises not to.

My Approach to Software Delivery

At a high level my approach is a focus on quality in the process of delivery, how we build software, and how the software is, or will be, used. Going deeper I see a cross-functional team that collaboratively iterates software towards some goal. The team engages stakeholders and subject matter experts and assimilates them if necessary. They design solutions and build and operate systems that amplify the stakeholders’ capabilities. The team discerns the right direction to take from the noise of many possible directions and validates their understanding with experiments performed in prod. The team delivers in small increments and gets feedback early and often. They use the feedback to adapt and improve their approach. They automate everything they can and continuously improve their ways of working.

An acceptable level of quality is determined and maintained, and as the team improves, their quality expectations rise too. The environment that they operate in, however, will constantly challenge the team’s commitment to quality. An irrational barrage of arguments to reduce quality will be presented to the team regularly. The increasing complexity of system scope and the endless demands of changing stakeholders will require resilience and discipline to maintain quality. Or, put another way, suffering will constantly try to creep in and the team must constantly fight it off.

In software delivery, as quality increases, suffering decreases.

Why Quality?

For a while, I struggled with whether quality really mattered. I read, and hated, yet continued to ponder, Zen and the Art of Motorcycle Maintenance. I joined teams that hadn’t focused on quality and helped them out of their suffering. But I left those same teams and saw quality slip. I thought about all the teams that delivered software without me. I ranted about quality and why we should automate everything and why we should understand each other better. I thought about the successful outcomes I led teams to deliver before I focused on quality. Maybe I was scared to speak up against suffering. Certainly my tolerance for it was higher back then. Ultimately I concluded that you don’t need quality to deliver software. It’s just faster, and less painful, and easier, and more fun, if you do.

My preference is to avoid suffering and quality is the way to do that. Sometimes it’s easier to understand quality as a requirement when contrasted with results that are lacking in quality. When speed matters (and it rarely doesn’t), disregarding quality to arrive faster seems, intuitively, like an easy and almost free decision to make. Confusingly, the effect of low quality is not often immediate, so the decision to sacrifice quality for speed appears to pay off in the short term. But with each shortcut taken, the system marches further towards the big ball of mud, gradually becoming harder and slower to change, and suffering becomes the norm.

Even systems that were initially built with quality in mind can deteriorate over time without continued attention to quality. Decisions to outsource development to cheaper providers, or to lower the bar for hiring developers, or to generate code using AI tools without steering, move us further into suffering because the quality expectations are lowered. It’s possible to have these approaches work, but only by including quality as a first-class concern. But quality itself is a complex topic, difficult to define and communicate, and heavily dependent on context and capability, both of which change over time.

What is Quality?

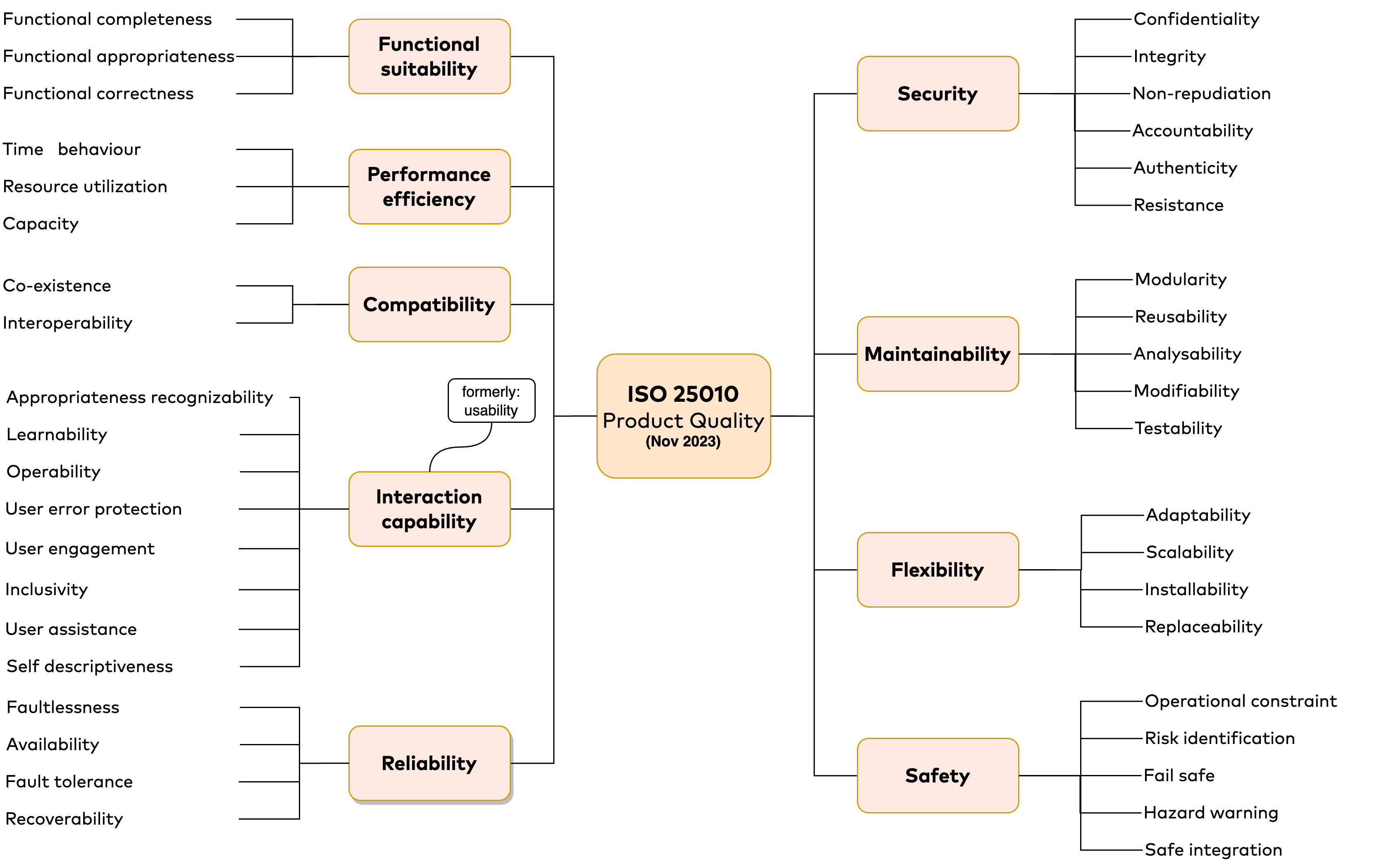

While subsequent posts will detail how to achieve quality within my particular approach to software delivery, I pondered first writing about what quality is and how it applies to software delivery in general. This was a mistake, as it appears we’ve been trying to define it for more than 50 years and still haven’t reached consensus. ISO 25010 is the latest international standard model for (software) product quality, ISO 25019 covers quality in use, and a concise summary of both is available here.

ISO/IEC 25010:2023 Software Quality Model (source: quality.arc42.org, Dr. Gernot Starke).

See also, a summary of ISO-25010.

As both a programmer and a leader of teams that deliver software, my interest also extends to the quality of the process by which software is produced. Developer Productivity for Humans, Part 7: Software Quality includes Process Quality in its Four Types of Software Quality model:

A theory for how “software quality” is broken into four component types. The arrows represent the direction of influence: process quality is believed to influence code quality. C. Green, C. Jaspan, M. Hodges and J. Lin, Developer Productivity for Humans, Part 7: Software Quality, in IEEE Software, vol. 41, no. 1, pp. 25-30, Jan.-Feb. 2024, doi: 10.1109/MS.2023.3324830.

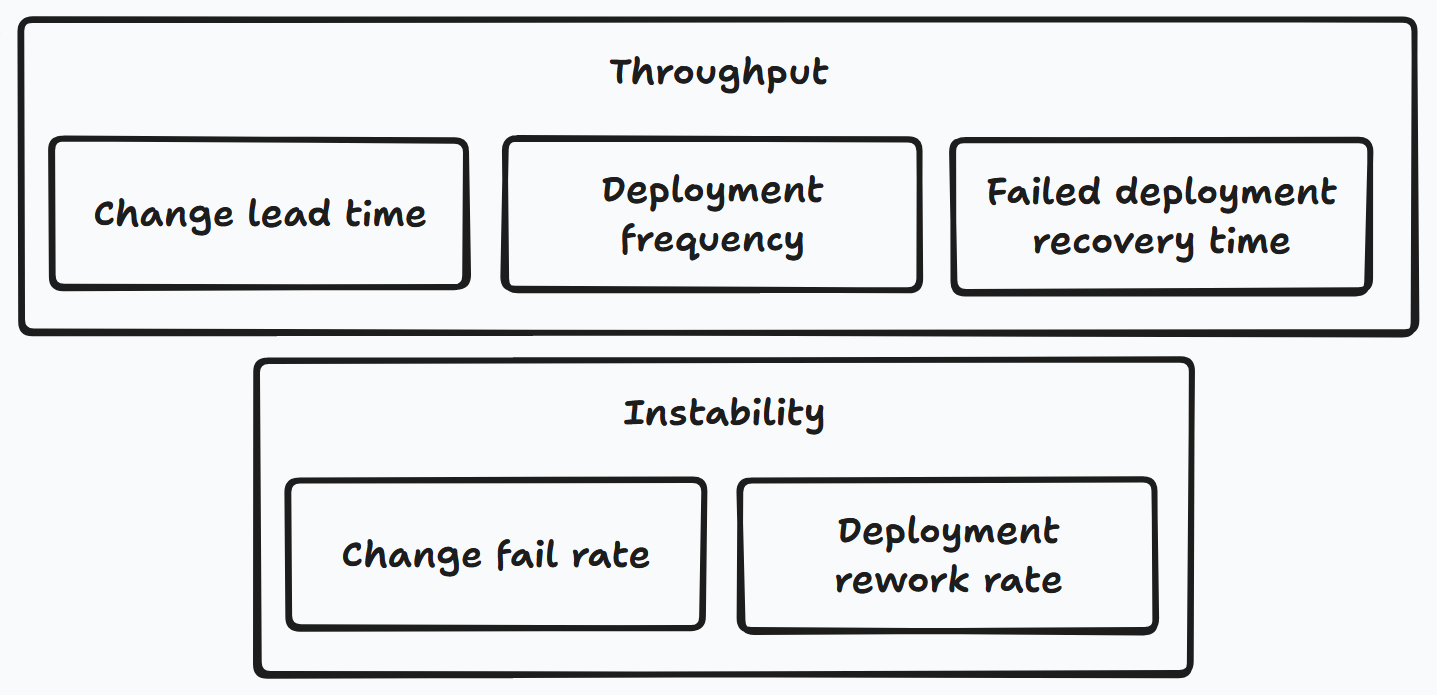

The DORA research program’s (previously four, but now) five metrics implicitly measure aspects of process quality across two categories: Throughput and Instability.

DORA’s software delivery performance metrics focus on a team’s ability to deliver software safely, quickly, and efficiently. They can be divided into metrics that show the throughput of software changes, and metrics that show instability of software changes.

The topic is dauntingly large, so large in fact that I could dedicate this entire website to it and likely never cover it all. Instead, let me state three truths about quality that underpin my approach to software delivery:

- Quality should pervade all aspects of software delivery.

- The enforcement of quality should be as automated as possible.

- Quality is context dependent and the quality bar should be continuously raised.

My experience delivering software has shown me that guiding software delivery by these ideas leads to less suffering for all involved. But you don’t need to take my word for it. Many others have written extensively on this topic. Thanks to the internet I don’t need to continue down the rabbit hole of trying to define or argue for quality myself and can instead focus on illustrating how I apply it in practice. For now, I’ll leverage the vast body of existing work on the matter, curated by Claude Opus 4.5. The following is an excellent starting point for delving into what quality is and whether it matters. The summary is a good read, though you can skip to the source articles and drink straight from the well.

Why Quality Matters

Quality is not the enemy of speed—it is its enabler. Six decades of software engineering research and modern DevOps studies converge on a counterintuitive finding: high-quality software is cheaper and faster to produce over any meaningful timeframe. The DORA research program, studying over 31,000 professionals across six years, demonstrates that elite-performing teams excel at both speed and stability simultaneously—they are not tradeoffs. For organizations building software, this means that investing in quality is a strategic advantage, not a luxury. Yet context still matters: MVPs, prototypes, and time-to-market pressures create legitimate scenarios where quality decisions become nuanced economic calculations rather than moral imperatives.

This report synthesizes foundational works from authors like Martin Fowler, Gerald Weinberg, and Fred Brooks alongside recent practitioner research from DORA, Stripe, and leading engineering organizations to address what quality means for teams building software today.

The Foundational Case: Why Quality Matters According to the Pioneers

The software industry’s most influential thinkers reached remarkably consistent conclusions about quality, though from different angles. Martin Fowler articulates the economic argument most directly in his article “Is High Quality Software Worth the Cost?”:

High internal quality reduces the cost of future features, meaning that putting the time into writing good code actually reduces cost. The “cost” of high internal quality software is negative.

His “Design Stamina Hypothesis” suggests teams hit the crossover point—where quality investments start paying dividends—within weeks rather than months.

Ward Cunningham, who coined the technical debt metaphor in 1992, intended it as a communication tool for explaining to business stakeholders why code needed refactoring. His original insight deserves careful attention:

Shipping first time code is like going into debt. A little debt speeds development so long as it is paid back promptly with a rewrite… Entire engineering organizations can be brought to a stand-still under the debt load of an unconsolidated implementation.

Cunningham later clarified he was never in favor of writing code poorly—the debt metaphor was about understanding evolving systems, not about permission to cut corners.

Gerald Weinberg’s deceptively simple definition—“Quality is value to some person”—shifts focus from abstract metrics to stakeholder value. His Quality Software Management series and The Psychology of Computer Programming established that software development is fundamentally a human activity, pioneering concepts like egoless programming that underpin modern code review and pair programming practices.

Fred Brooks emphasized conceptual integrity as central to product quality, famously observing that “software work is the most complex that humanity has ever undertaken.” His warning about system entropy remains relevant: “As time passes, the system becomes less and less well-ordered. Sooner or later the fixing ceases to gain any ground.”

The quality management tradition brought complementary insights. Philip Crosby’s “Quality is Free” argued that prevention costs less than correction—a principle W. Edwards Deming extended with his fourteen points:

Organizations can increase quality and simultaneously reduce costs by reducing waste, rework, staff attrition, and litigation.

These manufacturing-derived principles translated directly to software through the agile movement.

The Costs of Poor Quality Compound Faster Than Most Expect

The business case for quality is built on hard numbers. The Consortium for Information and Software Quality (CISQ) estimates the cost of poor software quality to US companies at $2.41 trillion annually, with technical debt specifically accounting for $1.52 trillion. McKinsey research finds that technical debt consumes up to 40% of IT balance sheets and diverts 10-20% of technology budgets meant for new products toward resolving existing problems.

Developer productivity suffers dramatically. Stripe’s Developer Coefficient Report found that 42% of the average developer work week—17.3 hours of a 41.1-hour week—is spent on technical debt and dealing with bad code, representing approximately $85 billion in global opportunity cost annually. A longitudinal academic study by Besker, Bosch, and Martini found developers waste 23% of working time specifically due to technical debt. In almost 25% of cases when encountering technical debt, developers are forced to introduce additional technical debt simply because of existing debt—a compounding effect that mirrors financial debt dynamics.

The cost escalation curve for fixing defects is well-documented through IBM’s Systems Sciences Institute research. A bug caught at the design stage costs 1x to fix. At implementation, that rises to 6x. During testing, 15x. In production, between 30x and 100x. One practitioner formulation: €100 to fix during unit testing becomes €100,000 after release. Toyota’s 2010 anti-lock brake software bug, which led to recalls of over nine million vehicles at approximately $3 billion in cost, illustrates the extreme end of this curve.
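
To make the escalation curve concrete, here is a minimal sketch that applies the multipliers quoted above to a baseline cost. The function name, the phase labels, and the 500 baseline are hypothetical, chosen only for illustration, and production is modelled as the 30x to 100x range from the text.

```python
# Cost multipliers relative to a fix made at design time, as quoted above.
# Production is quoted as a range, so every phase maps to a (low, high) pair.
PHASE_MULTIPLIERS = {
    "design": (1, 1),
    "implementation": (6, 6),
    "testing": (15, 15),
    "production": (30, 100),
}


def estimated_fix_cost(design_stage_cost, phase):
    """Return the (low, high) estimated cost of fixing a defect found in `phase`.

    `design_stage_cost` is a hypothetical cost of fixing the same defect
    at design time, in any currency.
    """
    low, high = PHASE_MULTIPLIERS[phase]
    return design_stage_cost * low, design_stage_cost * high


if __name__ == "__main__":
    hypothetical_design_cost = 500  # illustrative figure only
    for phase in PHASE_MULTIPLIERS:
        low, high = estimated_fix_cost(hypothetical_design_cost, phase)
        label = f"{low}" if low == high else f"{low} to {high}"
        print(f"{phase:<15} {label}")
```

The multipliers are rough industry figures rather than a law, so the output is best read as an order-of-magnitude prompt for discussion, not a forecast.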

Security vulnerabilities present significant risk. IBM’s Cost of Data Breach Report shows organizations face substantial breach costs, with smaller companies (under 500 employees) averaging $3.31 million. More starkly, 60% of small businesses that suffer a cyberattack go out of business within six months. The ThreatX survey found that 60% of consumers are less likely to work with a company that has suffered a data breach—reputation damage that persists for years.

Downtime costs are similarly severe: $137-$427 per minute for smaller organizations, which works out to roughly $8,000-$25,000 per hour. Companies with frequent downtime have 16x higher costs than those with fewer outages.
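
The per-minute and per-hour figures are related by simple arithmetic: $137 to $427 per minute corresponds to $8,220 to $25,620 per hour. A small sketch, with hypothetical function names, shows the conversion and how it might be used to put a rough price on a single outage.

```python
MINUTES_PER_HOUR = 60


def per_minute_to_per_hour(cost_per_minute):
    """Convert a downtime cost quoted per minute into a cost per hour."""
    return cost_per_minute * MINUTES_PER_HOUR


def outage_cost_range(duration_minutes, low_per_minute=137, high_per_minute=427):
    """Return the (low, high) estimated cost of an outage of the given length.

    The defaults are the per-minute figures quoted above for smaller
    organizations; substitute your own numbers where you have them.
    """
    return duration_minutes * low_per_minute, duration_minutes * high_per_minute


if __name__ == "__main__":
    print(per_minute_to_per_hour(137), per_minute_to_per_hour(427))
    print(outage_cost_range(45))  # a hypothetical 45-minute outage
```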

High Performers Achieve Speed and Stability Together

The DORA research program (DevOps Research and Assessment), documented in the Accelerate book by Forsgren, Humble, and Kim, provides the strongest empirical evidence that quality and speed are not tradeoffs. According to Code Climate’s analysis of DORA metrics, teams excelling at these metrics are 2x more likely to exceed organizational performance goals and 1.8x more likely to report better customer satisfaction.

The four DORA metrics reveal this dynamic clearly. Elite performers achieve multiple deploys per day (versus monthly for low performers), lead times measured in hours or days (versus weeks or months), change failure rates of 0-15% (versus 46-60%), and recovery times in minutes or hours (versus days or weeks). The research shows organizations failing in one area typically fail in all four—success is correlated across both speed and stability dimensions.
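
As an illustration of how a team might start tracking these four metrics, the sketch below summarises them from a list of deployment records. The `Deployment` record, its field names, and the choice to measure recovery from the moment of deployment are simplifying assumptions for this example, not DORA's official definitions.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import List, Optional


@dataclass
class Deployment:
    committed_at: datetime                  # when the change was committed
    deployed_at: datetime                   # when it reached production
    caused_failure: bool = False            # did it degrade service?
    restored_at: Optional[datetime] = None  # when service was restored, if it failed


def _hours(delta: timedelta) -> float:
    return delta.total_seconds() / 3600


def dora_summary(deployments: List[Deployment], period_days: int) -> dict:
    """Summarise throughput and stability for deployments made over `period_days`."""
    if not deployments:
        raise ValueError("need at least one deployment to summarise")
    lead_times = [_hours(d.deployed_at - d.committed_at) for d in deployments]
    failures = [d for d in deployments if d.caused_failure]
    restore_times = [
        _hours(d.restored_at - d.deployed_at)
        for d in failures
        if d.restored_at is not None
    ]
    return {
        "deploys_per_day": len(deployments) / period_days,
        "median_lead_time_hours": median(lead_times),
        "change_failure_rate": len(failures) / len(deployments),
        "median_time_to_restore_hours": median(restore_times) if restore_times else None,
    }


if __name__ == "__main__":
    start = datetime(2024, 1, 1, 9, 0)  # hypothetical sample data
    sample = [
        Deployment(start, start + timedelta(hours=2)),
        Deployment(start, start + timedelta(hours=4), True,
                   start + timedelta(hours=4, minutes=30)),
    ]
    print(dora_summary(sample, period_days=1))
```

Medians are used rather than means so that one unusually slow change or long outage does not dominate the summary; that is a design choice for this sketch.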

As LinearB explains, these metrics help teams understand the relationship between speed and quality in their delivery pipeline. The DX guide to DORA metrics emphasizes that measuring these four key indicators helps organizations identify bottlenecks and improve both velocity and reliability simultaneously. The 2024 Accelerate State of DevOps Report continues to validate these findings while exploring how AI tools are affecting software delivery performance.

Customer experience research reinforces this connection. 91% of customers who had a bad experience will not do business with the same company again. 33% will switch to a competitor after a single bad experience. Conversely, customers rating their experience 10/10 spend 140% more and remain loyal up to six times longer. Businesses prioritizing exceptional customer service grow revenues 4-8% above market average.

Developer retention creates another feedback loop. Research from InfoQ shows that technical debt is fundamentally an organizational issue, not just a technical one. Studies on tech debt and morale indicate that 52% of engineers believe technical debt negatively impacts team morale. Developers working in complex, debt-ridden areas of code are 10x more likely to leave. Teams with high turnover accumulate 37% more technical debt and spend 22% more time debugging—creating a vicious cycle where poor quality drives away the people most capable of improving it.

When Quality Can Legitimately Be Deprioritized

Despite the strong case for quality, context matters. The Lean Startup methodology explicitly calls for minimum viable products that test business hypotheses with minimal investment. Eric Ries defines the MVP as “that version of a new product a team uses to collect the maximum amount of validated learning about customers with the least effort.” The purpose is market validation, not production-grade systems.

Steve McConnell of Construx identifies three strategic reasons for intentional technical debt in his whitepaper “Managing Technical Debt.” First, time to market:

When time to market is critical, incurring an extra $1 in development might equate to a loss of $10 in revenue.

Second, preservation of startup capital:

If it can delay an expense for a year or two, it can pay for that expense out of greater money later.

Third, system end-of-life: near the end of a system’s service life, it becomes increasingly difficult to cost-justify investing in anything other than what’s most expedient.

Richard Gabriel’s “Worse is Better” philosophy, articulated in 1989, observed that Unix and C succeeded over more “correct” alternatives precisely because their simpler implementations allowed faster spread and iteration. The core principle, also discussed in academic contexts and related to the principle of good enough, is that implementation simplicity should take priority over interface simplicity or completeness.

It is better to get half of the right thing available so that it spreads like a virus. Once people are hooked on it, take the time to improve it to 90% of the right thing.

Andy Hunt and Dave Thomas (The Pragmatic Programmer) bring practical wisdom about “Good-Enough Software”:

You say to a user, “You can have this software in two months with a few rough edges. Or, we can polish it to perfection, and you can have it in seven years. Which would you prefer?”

Their answer:

The scope and quality of the system you produce should be specified as part of the system requirements.

Quality becomes a requirement to negotiate, not an absolute standard. As discussed in the Pragmatic Programmer excerpt, users should be given the opportunity to participate in determining what level of quality is acceptable for their needs.

Martin Fowler’s Technical Debt Quadrant helps frame these decisions:

- Prudent and deliberate: “We must ship now and deal with consequences” — acceptable when consciously analyzed

- Prudent and inadvertent: “Now we know how we should have done it” — inevitable learning

- Reckless and deliberate: “We don’t have time for design” — avoid

- Reckless and inadvertent: “What’s layering?” — address through training

The key insight from his Technical Debt article: the difference between prudent and reckless debt is whether you’ve thoughtfully analyzed whether the payoff for earlier release exceeds the costs.

The Hidden Costs That Make “Temporary” Permanent

Counter-arguments deserve equal weight. Throwaway code rarely stays throwaway. Jeff Atwood observes:

Prototypes are designed to be thrown away. If you can’t bring yourself to throw the prototype away, then stop prototyping.

Developer attachment to prototypes can lead to “attempting to convert a limited prototype into a final system when it does not have an appropriate underlying architecture,” as discussed in the software prototyping literature.

MVP technical debt compounds dangerously. Poor quality implementation can invalidate MVP testing by introducing variables unrelated to core hypotheses. A low-quality MVP can negatively affect brand reputation or make people write off a good idea too soon. Competitors who see your MVP might “swoop in with something better, faster” if you’re slow to improve.

The speed illusion is particularly pernicious. As noted earlier, Fowler’s Design Stamina Hypothesis puts the crossover point, where quality investments start paying off, at weeks rather than months. One practitioner analysis describes the endgame:

In advanced stages, technical debt takes a project hostage. Nobody wants to touch the project anymore. Features take ages to complete. The speedy start that was once so important for the project’s success has long been forgotten and, looking back, wasn’t that important after all.

Another perspective from CodeAhoy describes technical debt as “soul-crushing” for developers who must work within it.

The research on code quality and modification time is stark: low-quality code takes more than twice as long to modify and has 15× higher defect density according to studies by Tornhill and Borg.

Three Distinct Quality Dimensions That Interact Causally

Contemporary frameworks distinguish between three types of quality that, while related, address different concerns with different stakeholders.

Internal quality (quality of the software itself) encompasses code structure, architecture, modularity, technical debt levels, coupling/cohesion, and complexity. As Fowler explains, developers and architects care about this dimension; it’s invisible to end users but determines how easily the system can evolve. Mike Long’s analysis emphasizes that internal quality directly impacts the team’s ability to respond to change. ISO 25010 maps this primarily to Maintainability characteristics—modularity, reusability, analyzability, modifiability, testability.

External quality (quality of use) covers user experience, functional correctness, performance, reliability, and accessibility. Matti Lehtinen’s article on internal vs. external quality explains that end users and customers perceive this directly. ISO 25010’s updated 2023 standard addresses this through Functional Suitability, Interaction Capability (formerly Usability), Reliability, and Performance Efficiency characteristics, with a separate “Quality in Use” model measuring effectiveness, efficiency, satisfaction, and freedom from risk.

Process quality (quality of production) includes development practices, CI/CD pipeline health, testing practices, code review thoroughness, and documentation. Abi Noda’s Engineering Enablement newsletter identifies this as foundational: “process-based metrics are even more predictive of post-release defects than existing code quality metrics.”

Research from Google’s Engineering Productivity team proposes a chain of influence: Process Quality → Code Quality → System Quality → Product Quality. On this theory, everything begins with high-quality development processes because higher process quality leads to higher code quality. This is consistent with the DORA finding that elite performers excel across all dimensions simultaneously: they’ve invested in the foundational process layer.

Fowler’s key insight about the relationship:

Users and customers can see what makes a software product have high external quality, but cannot tell the difference between higher or lower internal quality.

Yet internal quality affects external quality over time—poor internal quality creates cruft that slows down feature development, eventually degrading the user experience through slower improvements and more bugs.

Modern Practices Embed Quality Throughout Delivery

Contemporary DevOps and continuous delivery practices operationalize these quality principles. Shift-left testing moves quality assurance activities earlier in the development lifecycle—from post-development validation to requirements and design phases. Organizations adopting shift-left report 30-40% increases in deployment frequency by eliminating bottlenecks.

Quality gates in CI/CD pipelines enforce standards automatically: static analysis for code quality and security vulnerabilities, unit and integration test coverage thresholds (80%+ recommended), dependency scanning, performance threshold validation, and DORA metrics monitoring. Netflix’s approach exemplifies production-oriented quality: chaos engineering through tools like Chaos Monkey proactively finds vulnerabilities, while their experimentation platform runs rigorous A/B testing on virtually every product change.
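
A quality gate of this kind can be sketched as a small check that a pipeline step runs against the results of a build. The `BuildReport` and `GateThresholds` types and their field names are hypothetical; the 80% coverage default comes from the text, while the zero-tolerance defaults for static analysis errors and vulnerable dependencies are assumptions made for this example.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class BuildReport:
    coverage_percent: float
    static_analysis_errors: int
    vulnerable_dependencies: int


@dataclass
class GateThresholds:
    min_coverage_percent: float = 80.0    # threshold mentioned in the text
    max_static_analysis_errors: int = 0   # assumed default
    max_vulnerable_dependencies: int = 0  # assumed default


def evaluate_gate(report: BuildReport,
                  thresholds: Optional[GateThresholds] = None) -> List[str]:
    """Return a list of failure messages; an empty list means the gate passes."""
    thresholds = thresholds or GateThresholds()
    failures = []
    if report.coverage_percent < thresholds.min_coverage_percent:
        failures.append(
            f"coverage {report.coverage_percent:.1f}% is below "
            f"{thresholds.min_coverage_percent:.1f}%"
        )
    if report.static_analysis_errors > thresholds.max_static_analysis_errors:
        failures.append(f"{report.static_analysis_errors} static analysis errors")
    if report.vulnerable_dependencies > thresholds.max_vulnerable_dependencies:
        failures.append(f"{report.vulnerable_dependencies} vulnerable dependencies")
    return failures


if __name__ == "__main__":
    problems = evaluate_gate(BuildReport(72.5, 3, 0))
    for problem in problems:
        print("gate failed:", problem)
```

In a real pipeline the step would typically exit with a non-zero status when the list is non-empty, so that the build stops without a human having to notice.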

Technical debt management has matured with practical guidance. The CMU Software Engineering Institute recommends bringing visibility to instances of technical debt, making tradeoffs explicit, incorporating TD management into SDLC activities, and developing organizational support. Multiple sources converge on allocating 15-20% of sprint capacity specifically to maintenance and improvements.

Research on velocity versus technical debt shows that engineering leaders face a persistent dilemma in balancing short-term delivery pressure against long-term system health. One case study showed a company investing 25% of development capacity in platform improvements initially slowed feature delivery by 15%, but by year-end teams delivered features 40% faster.

The 2024 DORA report notes that platform engineering—building internal developer platforms that standardize quality practices—shows promise for larger organizations, though the report cautions that smaller organizations may find it counterproductive due to overhead. Pragmatic Coders’ guide to calculating tech debt costs provides nine specific metrics organizations can use to quantify their technical debt burden.

The Human Cost: Morale, Retention, and Organizational Health

Beyond the financial metrics, research on technical debt and developer morale reveals significant human costs. Engineers working in high-debt codebases report frustration, reduced job satisfaction, and a sense that their professional skills are being wasted on workarounds rather than meaningful work.

As The Pragmatic Programmer discusses, there’s a psychological dimension to quality: developers take pride in their work, and being forced to produce or maintain low-quality code conflicts with professional identity. The concept of “good enough software” isn’t about lowering standards—it’s about making conscious, collaborative decisions about where quality investments matter most.

Making Quality Decisions: A Practical Framework

For any organization, quality decisions require economic thinking rather than moral imperatives. Several questions guide appropriate quality investment:

What’s the expected lifespan? For projects with a long shelf life, avoiding technical debt is crucial. Near end-of-life systems justify more expedient approaches.

What’s the cost of iteration? Web applications with easy release cycles can tolerate more quality debt than firmware requiring physical replacement.

What are the safety and regulatory requirements? Medical devices and financial systems demand different standards than internal tools.

What’s being tested? Business hypothesis validation can accept more rough edges than technical reliability demonstrations.

Who are the users? Early adopters are more forgiving than general market consumers.

The practical synthesis: quality can be deprioritized when:

- Genuinely validating business hypotheses (not building production systems)

- Facing genuine time-to-market constraints where delay equals significant revenue loss

- Building true throwaway prototypes with clear intent to discard

- Near system end-of-life

- Making deliberate and documented prudent tradeoffs with repayment plans

Quality should not be deprioritized when:

- The quick fix affects frequently-modified code (high interest payments)

- The team cannot reliably pay off debt (no refactoring practices or tests)

- Making reckless decisions without understanding consequences

- The product serves as market validation (low quality invalidates the test)

- Brand reputation is at stake

- Taking on “unfocused” debt through many tiny shortcuts
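
The two lists above can be sketched as a simple checklist. The condition labels and the `assess` function are hypothetical, and the rule that any "should not" condition overrides every "can" condition is an assumption of this sketch, not something the sources state.

```python
# Conditions under which the text says quality can be deprioritized.
ENABLERS = {
    "hypothesis_validation",
    "time_to_market",
    "throwaway_prototype",
    "end_of_life",
    "documented_tradeoff",
}

# Conditions under which the text says quality should not be deprioritized.
BLOCKERS = {
    "frequently_modified_code",
    "cannot_repay_debt",
    "reckless_decision",
    "product_is_market_validation",
    "brand_at_risk",
    "unfocused_debt",
}


def assess(conditions):
    """Return (recommendation, reasons) for the conditions that apply.

    Assumption: any blocker wins over any number of enablers, and with no
    conditions at all the default is to protect quality.
    """
    conditions = set(conditions)
    unknown = conditions - ENABLERS - BLOCKERS
    if unknown:
        raise ValueError(f"unknown conditions: {sorted(unknown)}")
    blockers = sorted(conditions & BLOCKERS)
    if blockers:
        return "protect quality", blockers
    enablers = sorted(conditions & ENABLERS)
    if enablers:
        return "deprioritizing may be acceptable", enablers
    return "protect quality", []


if __name__ == "__main__":
    print(assess({"time_to_market"}))
    print(assess({"time_to_market", "brand_at_risk"}))
```

A checklist like this cannot make the decision, but it can make the reasoning explicit and easy to record alongside the tradeoff.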

Conclusion: Quality as Competitive Advantage

The research yields several novel insights beyond conventional wisdom. First, the economic case for quality is stronger than the professional case—framing quality as an economic advantage wins more arguments than appealing to craftsmanship or pride. Second, the speed-quality tradeoff is largely illusory beyond the very short term; the crossover point where quality investment pays off arrives in weeks, not months or years. Third, process quality is foundational and underemphasized—it predicts code quality, system quality, and ultimately product quality.

The meta-principle from Hunt and Thomas deserves the final word: the choice isn’t quality versus speed. It’s which quality dimensions to prioritize for this context, with explicit documentation of tradeoffs and a plan for addressing them. Quality, properly understood, is not something you test after the fact—it’s something you build into how you work.

Key Sources and Further Reading

Foundational Works

- Martin Fowler: Is High Quality Software Worth the Cost?

- Martin Fowler: Technical Debt Quadrant

- Martin Fowler: Technical Debt

- Richard Gabriel: The Rise of “Worse is Better”

- Andy Hunt & Dave Thomas: Good-Enough Software

- The Pragmatic Programmer on Good-Enough Software

- Steve McConnell/Construx: Managing Technical Debt

DORA Research and DevOps Metrics

- DORA’s Software Delivery Performance Metrics

- Code Climate: What are DORA Metrics?

- Harness: Accelerating DevOps with DORA Metrics

- LinearB: DORA Metrics Guide

- DX: Complete Guide to DORA Metrics

- Atlassian: DORA Metrics

- Introduction to Accelerate and DORA Metrics

- 2024 State of DevOps Report Analysis

Technical Debt Research

- Wikipedia: Technical Debt

- Architecture Weekly: Tech Debt Doesn’t Exist, Trade-offs Do

- Full Scale: What Is Technical Debt?

- The Annual Cost of Technical Debt: $1.52 Trillion

- McKinsey: Tripped Up by Tech Debt

- McKinsey: Breaking Technical Debt’s Vicious Cycle

- Leanware: Technical Debt Management Best Practices

- Technical Debt and Productivity

- InfoQ: Technical Debt Isn’t Technical

- The Effects of Technical Debt on Morale

- CMU SEI: 5 Recommendations to Manage Technical Debt

- Velocity vs. Technical Debt for CTOs

- How to Calculate the Cost of Tech Debt

Cost of Poor Quality

- The True Cost of a Software Bug

- Average Cost of a Data Breach

- Cybersecurity Stats for SMBs

- True Cost of IT Downtime

- Cost of Downtime: Outages and Your Bottom Line

- 21 Downtime Statistics

- Developer Time Lost to Bad Code

Customer Experience and Business Impact

- 100 Stats on Customer Satisfaction

- Customer Experience Statistics: CX = Growth

- Customer Service Statistics and Benchmarks

- Software Developer Turnover Challenges

MVPs, Prototypes, and Quality Tradeoffs

- Wikipedia: Minimum Viable Product

- Wikipedia: Principle of Good Enough

- Wikipedia: Software Prototyping

- Jeff Atwood: The Prototype Pitfall

- Full Scale: MVP Guide

- OpenMind: What is MVP in Software Development?

- Hicron: What is a Minimum Viable Product?

- Speed, Quality, and Technical Debt

- Technical Debt is Soul-crushing

Internal vs. External Quality

- Mike Long: Internal and External Software Quality

- Matti Lehtinen: Internal vs. External Software Quality

- Abi Noda: Software Quality

- Developer Productivity for Humans, Part 7: Software Quality